What is in our head before experience begins to shape it through learning? Humans seem to possess an implicit knowledge of physical objects as complete, connected, solid bodies that persist even when they are not visible and that maintain their identity through time. Humans also seem to implicitly know that certain kinds of objects (such as other people or other animals) exhibit actions that are special, in that they are directed towards goals. What is the origin of this implicit and intuitive core knowledge, the sort of things we know about the properties of physical objects (known as naive physics) and psychological objects (naive psychology)? Is it present from birth – a product of evolution – or is it acquired through experience?

Studies on this subject sometimes involve human newborns, though there are limitations to the kinds of research that can be done with them. This is partly because humans’ behavioural repertoire soon after birth is quite restricted. There are obvious ethical limitations as well: one would not think of raising human newborns under sensory deprivation, for example, in order to answer scientific questions. Using animal models in addition to human studies offers a way to address these limitations. When we’re studying the origins of knowledge, the ideal model should combine some degree of sensory and motoric abilities at birth (eg, eyes open, able to walk), to facilitate cognitive testing, in addition to the possibility of accurate control of what the animal experiences before and soon after birth (to better determine whether certain behaviour is innate or learned). Precocial animals such as ducklings or chicks give us such a model.

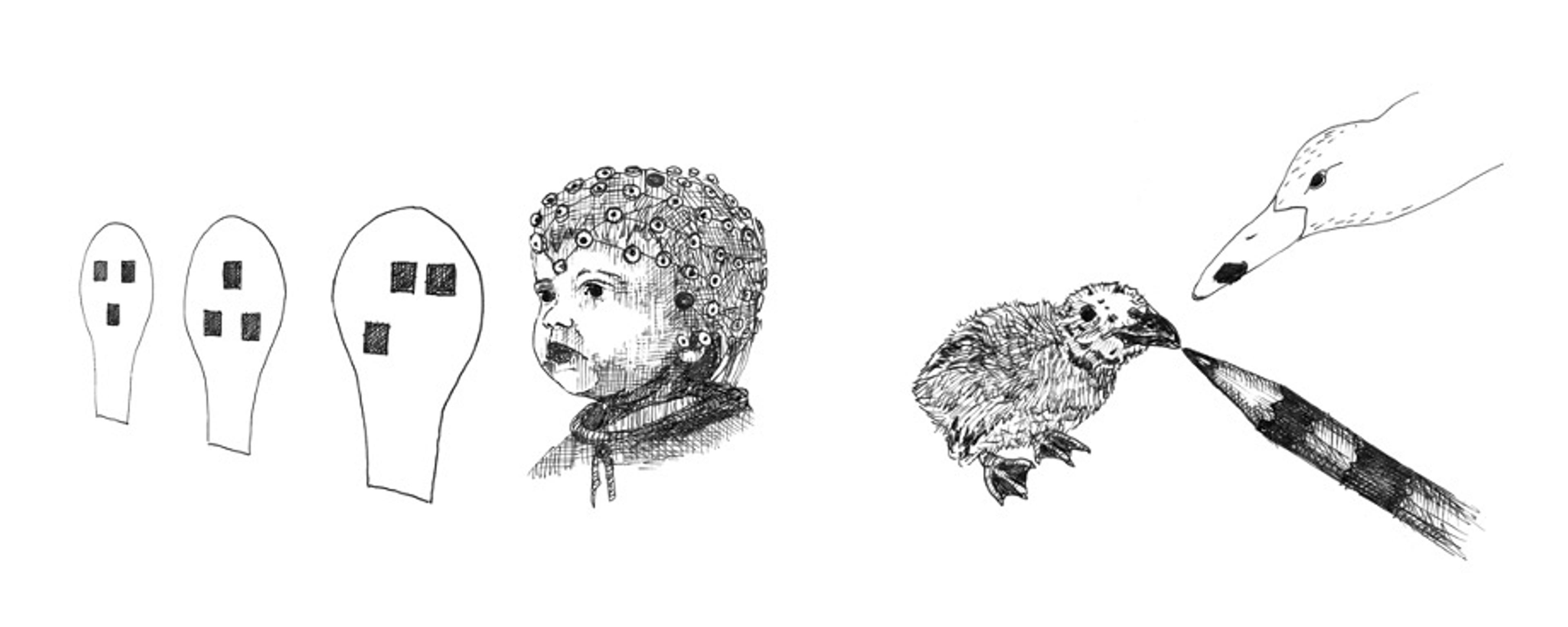

Chicks need to actively explore and learn about their environment from the moment they hatch. Therefore, they learn very rapidly to identify and attach to their mother and siblings. For a long time, this imprinting process was considered a form of exposure learning devoid of any constraints: in 1935, Martina the goose famously imprinted on the zoologist Konrad Lorenz – a very implausible mother. However, more recent research has shown that newly hatched chicks are not tabulae rasae: they hatch with predispositions to attend to, and thus to imprint on, particular kinds of stimuli. Before they have ever seen a mother hen, chicks prefer to approach objects that roughly resemble the shape of a mother hen, as originally shown by the neurobiologist Gabriel Horn and his colleagues. In my lab, we found that the configuration of the mother’s head features can be reduced to just an ovoid outline that contains three high-contrast blobs arranged as an inverted triangle – a sort of emoticon with eyes and beak/mouth.

The same kind of preference was subsequently discovered in human neonates, as the cognitive neuroscientist Mark Johnson and colleagues showed, based on the newborns’ eye gaze. Recently, my team used EEG to measure electrical activity in the brains of newborns as they saw face-like, inverted face-like, or scrambled face-like configurations, and we found impressive selectivity of response to the first pattern. That is, we observed a significantly stronger change in our measure of cortical activity in response to the upright face-like stimuli, as compared with the other stimuli. We also revealed the involvement of cortical areas that overlap with the adult face-processing circuit. Our findings suggest that the cortical route specialised for face processing is already functional at birth.

Of course, given that the sights and sounds these human infants are exposed to are not controlled immediately after birth (as they can be in the case of chicks), one could argue that quick learning is taking place – namely that just a few hours of exposure to the real faces of the mother and other human beings might suffice to trigger the preference. But I doubt this is so, for we found that the preference is stronger for younger babies than for older ones. Our babies were 70 hours old on average: some were only a few hours old at the time of testing, while others were older. The fact that longer exposure to natural faces seemed to, if anything, decrease the preference for face-like stimuli suggests that the preference is elicited by an abstract template of a face, devoted to attracting the attention of newborns towards this class of stimuli.

Drawings by Claudia Losi from the book Born Knowing (2021) by Giorgio Vallortigara, published by MIT Press.

These findings make clear the importance of parallel studies in the nonhuman animal model and in humans. Human studies provide evidence that the mechanisms for attending to face-like stimuli are at work in the newborns of our species. And the chick studies provide evidence that animals can possess these mechanisms from the very beginning of life.

At roughly the same time that Horn discovered the preference of newly hatched chicks for the head region of the mother hen, I was dwelling on another issue: are chicks attracted by any kind of motion, or do they prefer the motion of their own species for imprinting? With my collaborators, I used what are called ‘point-light displays’, stimuli in which only small points of light – representing the joints of an animal – are visible, which disentangles purely motion-related information from shape-related information. Chicks did prove to have a preference for biological motion, but not a species-specific one. For instance, they were ready to approach a display resembling a moving cat (or any other creature), though not a bunch of points of light moving randomly or rigidly.

In further fascinating research, the developmental psychologist Francesca Simion used our stimuli – point-light displays representing walking chickens – and found, by measuring looking times, that human newborns exhibit a similar preference for biological motion. Note that these babies did not have any experience with moving chickens in the few hours before the test! These results seem to suggest that the ability to recognise biological motion is independent of experience, as also seems to be true of the basic ability to recognise face-like stimuli.

It’s interesting to note that these life-detecting abilities appear to wax and wane as babies age. For instance, the preference for biological motion seems to vanish at one and two months of age in human infants, and then to reappear by three months. A likely explanation is that, at birth, animals possess innate mechanisms that act in a reflex-like manner, serving to direct their attention to relevant stimuli in the environment, such as caregivers. Then a second mechanism, based on learning, might take precedence, allowing more specific recognition – ie, the face of Mom as opposed to a stranger, or the movement of one’s own species as opposed to generic biological motion, and so on.

The existence of these mechanisms at birth should influence our understanding of the origins of knowledge, for it clearly challenges the view of our newborn minds as blank slates. This is true for human babies as well as other species. Most young vertebrates appear to be endowed with general mechanisms to detect, at birth, the presence of animate creatures. The ‘animacy detectors’ for which we have found evidence extend to a preference for self-propelling objects, as opposed to inert objects that move after being hit by another object. Human neonates and newly hatched chicks have also demonstrated preferences for objects moving with abrupt changes in speed, and for objects that move along their major (anterior-posterior) body axis – all these characteristics, again, being distinctive features of animate entities.

With newly hatched chicks, we have been able to search for where in the brain these animacy detectors reside, at a level that would be impossible with human subjects. By analysing the expression of so-called early genes (which guide the production of certain proteins that can be made visible by colouring them in the cells) we were able to identify tiny regions selectively involved in the preference for face-like stimuli (the nucleus taenia, which is homologous to the lateral part of the mammalian amygdala) and for changes of speed (the lateral septum, a brain area that has remained mostly the same in different classes of vertebrates such as mammals and birds).

The animacy detectors we have identified so far can be considered the most basic bricks for building up a social brain. The similarities between babies and chicks suggest that these detectors are evolutionarily old, possibly inherited from early social vertebrates. They allow young organisms to learn quickly by canalising their interest towards those portions of the environment that are most important for survival. Consider a young chick just out of the egg: there is a variety of moving things around, and, if it were expected to learn simply through exposure, the chance of errors and wasted time would be huge. Instead, it seems that animacy detectors direct the animal’s attention towards things that move semi-rigidly and with abrupt changes of speed, thus favouring learning by imprinting on biological things (hopefully, the mother hen and siblings, and not a passing cat). Similarly, it would be too slow and too risky to learn purely through trial and error what is a face and what is not. Thus, organisms are likely endowed by natural selection with a mechanism that directs their attention to face-like stimuli.

What if these animacy-detector mechanisms were for some reason diminished or delayed? We know of developmental disorders with genetic bases that might represent such conditions. Autism spectrum disorders (ASDs) are an example. A diagnosis of ASD cannot be made before two to three years of age, and we know that an early diagnosis would be extraordinarily valuable, enabling prompt psychological and social interventions. Differences in animacy detection could potentially aid such a diagnosis. Using the behavioural techniques we developed for animacy detection, we tested newborns with an increased likelihood of ASD (ie, those who have an older sibling diagnosed with ASD). We found, based on looking time, that these newborns appeared to have deficits in animacy detectors for face-like and biological-motion stimuli. It seems likely that the appearance of these detectors is delayed rather than absent, as some evidence for canonical preferences does emerge in these babies, but later on, at about four months. A delay in the development of animacy detectors could potentially affect the mutual interaction between an infant and a mother, but more research would be needed to test that hypothesis.

A reasonable interpretation of all these findings is that, although babies can certainly learn a lot about their environment by interacting with objects, both physical and social, they seem to be aided in doing so by inborn mechanisms that guide and constrain their inferences – a form of implicit knowledge about the world. In the absence of inborn guiding mechanisms, learning would likely take far too long. So we should expect such mechanisms to operate from the time of birth, and to be more numerous in those species – such as humans – that depend so deeply on learning.