Taking a final glance at your partner, you turn away, close your eyes, and cross your arms over your chest. With a deep breath, you exhale and slowly shift your weight backwards. Gravity overpowers your usual state of balance and you begin to tip back. At that crucial moment between standing and falling, do you bail and try to regain your balance? Or do you surrender to the fall, trusting that your partner will catch you?

This is the ‘trust fall’, a common way of building a relationship between people: I fall and, based on the way you catch me, I can trust you. Success requires a relationship between the faller and the catcher, as well as true, absurd vulnerability from each. That is, to fully inhabit the exercise, the faller needs to feel, simultaneously, that she is falling and that she will be caught. This is what we call an absurdity: holding two disjointed ideas to be simultaneously and equally true. The faller can’t simply look like she is falling; she must be out of control, she must abandon the resources at her disposal (like catching herself with a backward step) and genuinely drop backwards – knowing that no harm will come.

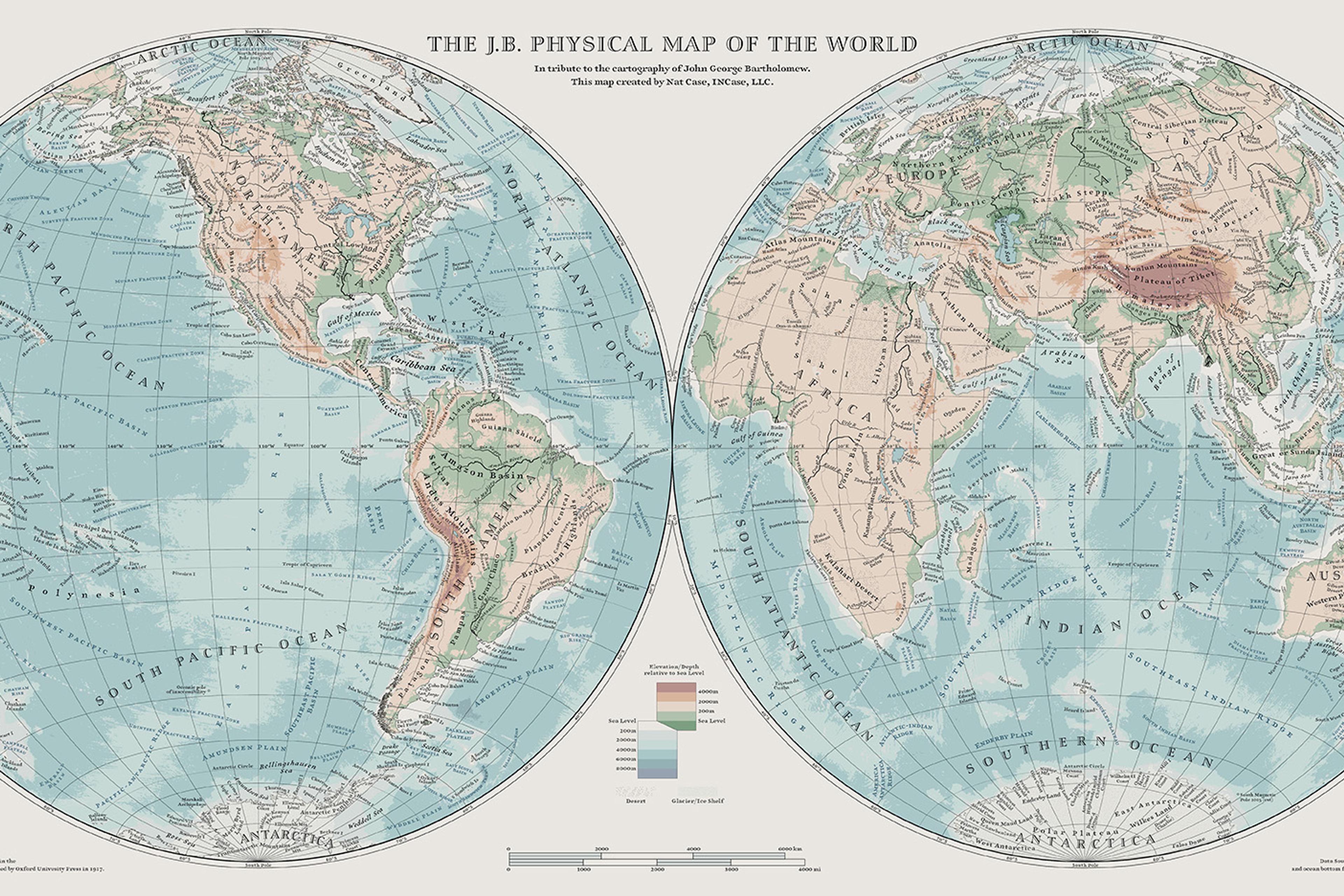

Ilya Vidrin and Orlando Reyes in Ilya Vidrin’s Attunement Or That Which Cannot Be Measured. Courtesy the author/New Museum, NYC. Photo credit: Lo Kuehmeier

Trust between humans relies on a frail and strange notion of shared experience, absurdity and vulnerability. Indeed, it’s surprisingly difficult to articulate exactly what trust is. One way of demonstrating that difficulty is to see if trust can be simulated – by robots. Attempting to turn trust into a strictly rational process demonstrates how hard it is to say just what’s going on when one person trusts another. So what happens when we ask ourselves and others to trust – or not trust – a machine, particularly one with some level of autonomy? What’s necessary for a robot to do a trust fall?

Trust is more than an on/off switch between trust and no trust. Roderick Kramer, a social psychologist, distinguishes between two types of trust: presumptive and tempered. Presumptive trust is the way that individuals operate from expectations about how an interaction ought to occur. It’s the ongoing assumption that people can, more or less, trust one another. ‘In many ways,’ Kramer wrote in 2009, ‘trust is our default position; we trust routinely, reflexively, and somewhat mindlessly across a broad range of social situations.’

Tempered trust, on the other hand, is when individuals actively monitor and assess possible interactions in the immediate environment. This is where authentic, full-bodied trust occurs. This is trust between two individuals, a kind of trust that needs to be cared for and developed; tempered trust requires continuous negotiation.

In our work, we adopt a choreographic lens in order to examine the embodied features of trust, especially in relation to automation and robotics. Let’s look at the distinction between presumptive and tempered trust in the case of trust falls. A true trust fall requires holding two contradictory states in simultaneous, sincere belief, a state of absurdity: I am falling but I am safe. Indeed, the absurdity is exacerbated by the fact that the act of falling places the faller at risk. In that sense – by entering a state of absurdity and wilfully risky behaviour – it’s an illogical thing to do. That’s why a trust fall can be experienced as a moment of wild abandon, in which individual safety is tossed aside in search of a trusting relationship with another person. Falling requires the utmost confidence in another to catch you and keep you safe. This is tempered trust, constructed through the experience of risk, and this is the kind of trust we’re searching for in robot trust falls.

It’s important to note, further, that genuine tempered trust isn’t possible if it’s unidirectional. Take the example of catching a plate. Sensing that a plate is falling, I lean forward to catch it. Success in catching might lead to a sense of accomplishment – I saved the plate! – but the action hasn’t served to build trust between me and the plate. So what about between me and a robot?

If a robot were designed to do a trust fall, it might have the capacity to stop its own fall. Automation can animate such a behaviour: the robot could look like it’s about to fall into your arms but at the last second stop itself or proceed with the fall. However, the mechanisms that drive automation have their limits. While automated devices often outperform their human counterparts (eg, excelling in magnitude and precision of force and velocity), these are not the aspects of embodiment that are required to develop a trusting physical relationship. Certainly, the hope for, say, autonomous vehicles is to reduce the human error that causes the untimely deaths of passengers, not recreate the current state of dangerous roadways. So the question, now, is: can we automate the simultaneity of falling and faith in being caught in embodied devices? Can we trust robots?

If we want to recreate the bidirectional trust behaviour in a machine, we need to represent the required behavioural structure with logic. Logic allows us to say things such as ‘If this is true, do that’ or ‘Until this condition is met, don’t do this’ or even ‘Always do this.’ But can it capture the structure of a full-bodied participant in an active, trusting relationship, particularly as found in the physicality of performing a trust fall with a partner? If we could automate this process, uncovering the mechanisms that underpin trusting our fellow humans, could we learn to have better relationships with each other? Even though success looks unlikely, a careful, sceptical attempt is worthwhile.
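To make those three forms of rule concrete, here is a minimal sketch in Python. It is an illustration under assumptions of our own, not a description of any real robot controller: the sensor names (`partner_ready`, `leaning`, `caught`) and the helper functions are hypothetical, and a real system would more likely use a formal temporal logic than hand-written checks over a short list of snapshots.

```python
from typing import Callable, Dict, List

# A snapshot of hypothetical sensor readings at one moment in time.
State = Dict[str, bool]
Pred = Callable[[State], bool]


def implies(cond: Pred, then: Pred) -> Pred:
    """'If this is true, do that': fails only when cond holds and then does not."""
    return lambda s: (not cond(s)) or then(s)


def always(p: Pred, trace: List[State]) -> bool:
    """'Always do this': p must hold in every snapshot of the trace."""
    return all(p(s) for s in trace)


def until(hold: Pred, release: Pred, trace: List[State]) -> bool:
    """'Until this condition is met, don't do this'.

    hold must be true in every snapshot before the first one where release
    is true, and release must actually occur somewhere in the trace.
    """
    for s in trace:
        if release(s):
            return True
        if not hold(s):
            return False
    return False


# Hypothetical predicates over the snapshot.
leaning: Pred = lambda s: s["leaning"]
not_leaning: Pred = lambda s: not s["leaning"]
partner_ready: Pred = lambda s: s["partner_ready"]

good_trace: List[State] = [
    {"partner_ready": False, "leaning": False, "caught": False},
    {"partner_ready": True, "leaning": False, "caught": False},
    {"partner_ready": True, "leaning": True, "caught": False},
    {"partner_ready": True, "leaning": True, "caught": True},
]
bad_trace: List[State] = [
    {"partner_ready": False, "leaning": True, "caught": False},
]

# Always: if the robot is leaning, the partner is ready.
safe_good = always(implies(leaning, partner_ready), good_trace)
safe_bad = always(implies(leaning, partner_ready), bad_trace)

# Until the partner is ready, don't lean.
waited_good = until(not_leaning, partner_ready, good_trace)
waited_bad = until(not_leaning, partner_ready, bad_trace)
```

Each rule returns a plain true or false for a given run of events. That crispness is the strength of logic, and it is also the reason the question that follows is a hard one.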

In manufacturing a robot trust fall, we could assign computational states that engage a machine’s sensors and actuators to stabilise or destabilise the device. We then note that in the stabilise state the machine is ‘not falling’, and in the destabilise state the machine is ‘falling’. What we can’t do is find a machine action that coordinates with its programming such that ‘falling’ and ‘not falling’ are simultaneously satisfied (without making a machine that fails to ever function). In a computer, absurdity doesn’t compile – to inhabit an absurd state requires that a system resolves paradoxes. Either we create a logic that allows the machine to inhabit ‘falling’ all the time or we create a state that’s associated with a distinct idea of ‘falling but safe’ rather than ‘falling’ and ‘not falling’. It’s a simulation of vulnerability but it’s not a true enactment of feeling, simultaneously, that you’re falling and that you’ll be caught.
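The point can be illustrated with a toy sketch in Python. Everything here is hypothetical: the state names and the two predicates stand in for whatever a real controller would use, and the code only illustrates the argument rather than proving it.

```python
from enum import Enum


class Mode(Enum):
    STABILISE = "stabilise"      # read as 'not falling'
    DESTABILISE = "destabilise"  # read as 'falling'


def falling(mode: Enum) -> bool:
    """True only for the state labelled as destabilising."""
    return mode.name == "DESTABILISE"


def not_falling(mode: Enum) -> bool:
    """True only for the state labelled as stabilising."""
    return mode.name == "STABILISE"


def modes_satisfying_both(modes) -> list:
    """Collect every state in which 'falling' and 'not falling' both hold."""
    return [m for m in modes if falling(m) and not_falling(m)]


class RelabelledMode(Enum):
    STABILISE = "stabilise"
    DESTABILISE = "destabilise"
    FALLING_BUT_SAFE = "falling but safe"  # a third, distinct label


print(modes_satisfying_both(Mode))            # []
print(modes_satisfying_both(RelabelledMode))  # []
```

Adding `FALLING_BUT_SAFE` gives the machine one more state to occupy, but the search for a state that is both ‘falling’ and ‘not falling’ still comes back empty. The new label names the absurdity without inhabiting it.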

When we apply words such as ‘trust’ to our tools, we reduce important dimensions (eg, physicality, affect, absurdity) of our understanding of trust, interpersonal relationships and embodiment. A simulated thing can be useful, but it’s not the real thing. Whether or not a simulated thing is ‘true’ or ‘authentic’, the deeper concern is that we can simulate trust but we can’t create it. With that understanding, when we act from presumptive trust, we make different decisions with tools and form different expectations of performance. Approaching robots as embodied agents that simulate aspects of trust could help humans create active, discerning relationships with automation rather than presumptively, mindlessly trusting the machine to do its job.